Building neural networks is at the heart of any deep learning technique. A neural network is trained through a series of forward and backward propagations that adjust the parameters of the model, and it is built from logistic regression classifiers as its basic units. This post builds on the math of logistic regression to describe more advanced neural networks in mathematical terms.

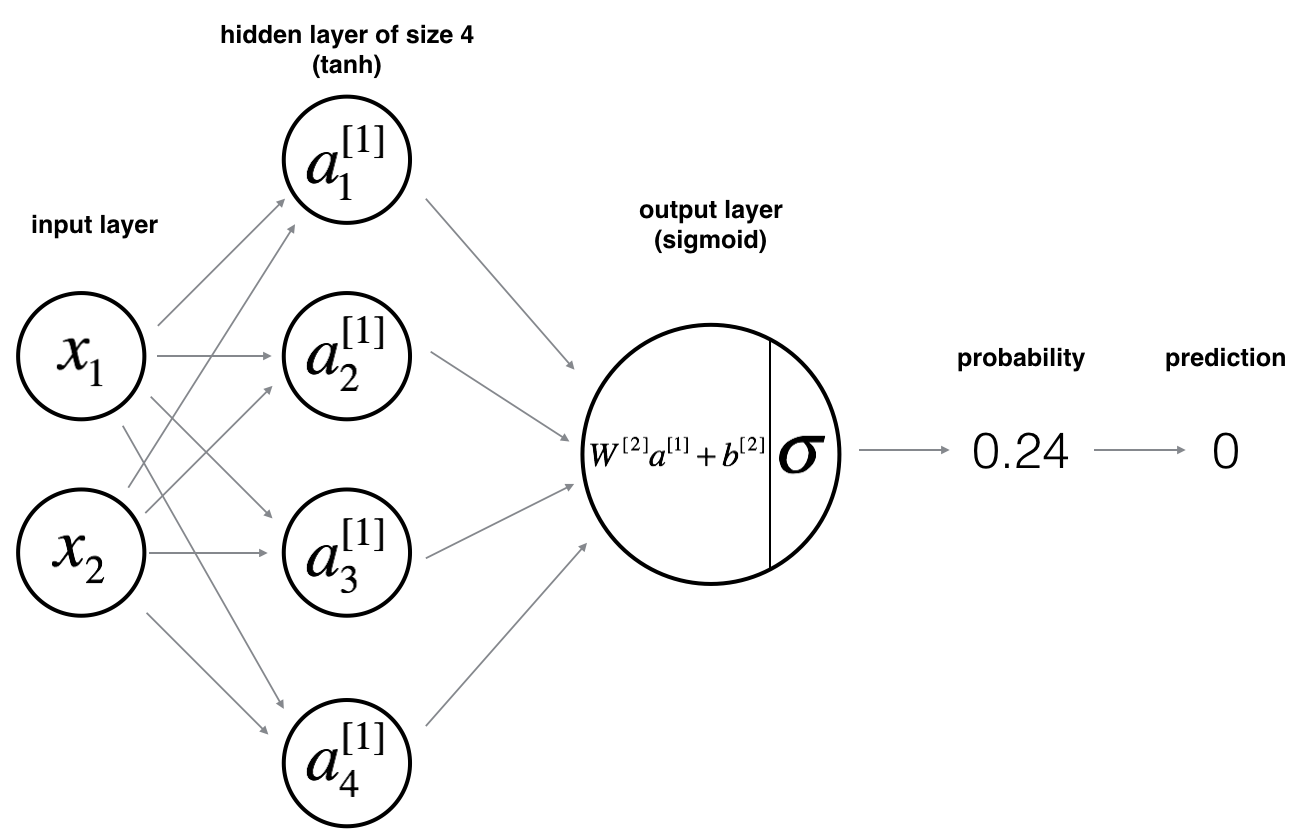

A neural network is composed of layers, and there are three types of layers: one input layer, one output layer, and one or more hidden layers. Each hidden and output layer has the same structure as a logistic regression classifier: a linear transformation followed by an activation function. Given a fixed input layer and output layer, we can build a more complex neural network by adding more hidden layers.

Before diving into the details of the mathematical model, it helps to see the big picture of the computation. To quote from the deeplearning.ai course:

The general methodology to build a Neural Network is to:

1. Define the neural network structure (number of input units, number of hidden units, etc.)
2. Initialize the model’s parameters
3. Loop:
    - Implement forward propagation
    - Compute loss
    - Implement backward propagation to get the gradients
    - Update parameters (gradient descent)

To make the math easier to follow, we take an incremental approach: first we analyze a 2-layer neural network, then we generalize to an L-layer neural network.

Two-layer neural network

Let’s consider the following hypothetical scenario: the input layer has two nodes, the hidden layer has three nodes, and the output layer has one node. Converting this network into mathematical terms, we have:

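The following are the input parameters that specify the 2-layer neural network, with the $m$ training examples stacked as columns:

- Input layer $X \in \mathbb{R}^{2 \times m}$, with its weight $W^{[1]} \in \mathbb{R}^{3 \times 2}$ and bias $b^{[1]} \in \mathbb{R}^{3 \times 1}$

- Output layer $\hat{Y} = A^{[2]} \in \mathbb{R}^{1 \times m}$, with its weight $W^{[2]} \in \mathbb{R}^{1 \times 3}$ and bias $b^{[2]} \in \mathbb{R}^{1 \times 1}$

- Hidden layer $A^{[1]} \in \mathbb{R}^{3 \times m}$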
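As a minimal NumPy sketch of this setup (not the author's code), the parameters for the 2-3-1 network can be created as below. It assumes examples are stored as columns of $X$, weights are drawn from a standard normal scaled by 0.01, and biases start at zero; the function name `initialize_parameters` and the 0.01 scale are illustrative choices.

```python
import numpy as np

def initialize_parameters(n_x, n_h, n_y, seed=0):
    """Return small random weights and zero biases for an n_x -> n_h -> n_y network."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.standard_normal((n_h, n_x)) * 0.01,  # shape (n_h, n_x)
        "b1": np.zeros((n_h, 1)),                      # shape (n_h, 1)
        "W2": rng.standard_normal((n_y, n_h)) * 0.01,  # shape (n_y, n_h)
        "b2": np.zeros((n_y, 1)),                      # shape (n_y, 1)
    }

# The scenario above: 2 input nodes, 3 hidden nodes, 1 output node.
params = initialize_parameters(n_x=2, n_h=3, n_y=1)
for name, value in params.items():
    print(name, value.shape)
```

This prints the shapes `(3, 2)`, `(3, 1)`, `(1, 3)` and `(1, 1)` for `W1`, `b1`, `W2` and `b2`, as expected for a 2-3-1 network.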

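To perform forward propagation for one example $x^{(i)}$, we have the following calculation:

$$z^{[1](i)} = W^{[1]} x^{(i)} + b^{[1]}$$
$$a^{[1](i)} = \tanh\left(z^{[1](i)}\right)$$
$$z^{[2](i)} = W^{[2]} a^{[1](i)} + b^{[2]}$$
$$\hat{y}^{(i)} = a^{[2](i)} = \sigma\left(z^{[2](i)}\right)$$

- If $a^{[2](i)} > 0.5$, then $y^{(i)}_{prediction} = 1$, otherwise $y^{(i)}_{prediction} = 0$.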
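The forward pass and the 0.5 threshold translate almost line for line into NumPy. This sketch processes all $m$ examples at once by stacking them as columns of `X`; the helper names `sigmoid`, `forward_propagation` and `predict` are hypothetical, and the parameters are random placeholders rather than trained values.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward_propagation(X, params):
    """X has shape (n_x, m); returns A2 with shape (1, m) plus the intermediate values."""
    Z1 = params["W1"] @ X + params["b1"]   # (n_h, m); b1 broadcasts across the m columns
    A1 = np.tanh(Z1)
    Z2 = params["W2"] @ A1 + params["b2"]  # (1, m)
    A2 = sigmoid(Z2)
    return A2, {"Z1": Z1, "A1": A1, "Z2": Z2, "A2": A2}

def predict(X, params):
    A2, _ = forward_propagation(X, params)
    return (A2 > 0.5).astype(int)  # 1 if a^[2](i) > 0.5, otherwise 0

rng = np.random.default_rng(1)
params = {
    "W1": rng.standard_normal((3, 2)) * 0.01, "b1": np.zeros((3, 1)),
    "W2": rng.standard_normal((1, 3)) * 0.01, "b2": np.zeros((1, 1)),
}
X = rng.standard_normal((2, 4))  # 4 examples with 2 features each
A2, cache = forward_propagation(X, params)
print(A2.shape, predict(X, params))
```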

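Given that we have computed $A^{[2]}$, which contains $a^{[2](i)}$ for every example, we can compute the cost function as follows:

$$J = -\frac{1}{m} \sum_{i=1}^{m} \left( y^{(i)} \log a^{[2](i)} + \left(1 - y^{(i)}\right) \log\left(1 - a^{[2](i)}\right) \right)$$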
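A direct translation of the cost formula, assuming `A2` and `Y` are NumPy arrays of shape (1, m); `compute_cost` is a hypothetical helper name. As a sanity check, when the network outputs 0.5 for every example, the cost equals $\log 2 \approx 0.693$ whatever the labels are.

```python
import numpy as np

def compute_cost(A2, Y):
    """Cross-entropy cost averaged over the m examples (A2 and Y have shape (1, m))."""
    m = Y.shape[1]
    logprobs = Y * np.log(A2) + (1 - Y) * np.log(1 - A2)
    return float(-np.sum(logprobs) / m)

print(compute_cost(np.array([[0.5, 0.5]]), np.array([[1, 0]])))  # about 0.6931, i.e. log 2
print(compute_cost(np.array([[0.9, 0.1]]), np.array([[1, 0]])))  # smaller: confident and correct
```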

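Given the cost function, we want to implement backward propagation, starting from the output layer $A^{[2]}$ and working back to the input $X$:

$$dZ^{[2]} = A^{[2]} - Y$$
$$dW^{[2]} = \frac{1}{m} dZ^{[2]} A^{[1]T}$$
$$db^{[2]} = \frac{1}{m} \sum_{i=1}^{m} dZ^{[2](i)}$$
$$dZ^{[1]} = W^{[2]T} dZ^{[2]} * \left(1 - \left(A^{[1]}\right)^2\right)$$
$$dW^{[1]} = \frac{1}{m} dZ^{[1]} X^T$$
$$db^{[1]} = \frac{1}{m} \sum_{i=1}^{m} dZ^{[1](i)}$$

Here $*$ denotes element-wise multiplication, and $1 - (A^{[1]})^2$ is the derivative of $\tanh$ evaluated at $Z^{[1]}$.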
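The backward equations can be sketched in NumPy and checked against a centered finite difference of the cost, a common way to catch sign or transpose mistakes. The function names, the random data and the choice of the entry `W1[0, 1]` to check are all illustrative assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(X, p):
    A1 = np.tanh(p["W1"] @ X + p["b1"])
    A2 = sigmoid(p["W2"] @ A1 + p["b2"])
    return A1, A2

def cost(A2, Y):
    m = Y.shape[1]
    return -np.sum(Y * np.log(A2) + (1 - Y) * np.log(1 - A2)) / m

def backward_propagation(X, Y, p):
    m = X.shape[1]
    A1, A2 = forward(X, p)
    dZ2 = A2 - Y                                # (1, m)
    dW2 = dZ2 @ A1.T / m
    db2 = np.sum(dZ2, axis=1, keepdims=True) / m
    dZ1 = (p["W2"].T @ dZ2) * (1 - A1 ** 2)     # tanh'(Z1) = 1 - A1^2
    dW1 = dZ1 @ X.T / m
    db1 = np.sum(dZ1, axis=1, keepdims=True) / m
    return {"dW1": dW1, "db1": db1, "dW2": dW2, "db2": db2}

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 5))
Y = (rng.random((1, 5)) > 0.5).astype(float)
p = {"W1": rng.standard_normal((3, 2)), "b1": rng.standard_normal((3, 1)),
     "W2": rng.standard_normal((1, 3)), "b2": rng.standard_normal((1, 1))}
grads = backward_propagation(X, Y, p)

# Nudge W1[0, 1] up and down and measure how the cost changes.
eps = 1e-6
p_plus = {k: v.copy() for k, v in p.items()}
p_minus = {k: v.copy() for k, v in p.items()}
p_plus["W1"][0, 1] += eps
p_minus["W1"][0, 1] -= eps
numeric = (cost(forward(X, p_plus)[1], Y) - cost(forward(X, p_minus)[1], Y)) / (2 * eps)
print(grads["dW1"][0, 1], numeric)  # the two values should agree closely
```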

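Then we use gradient descent to update $W^{[1]}$, $b^{[1]}$, $W^{[2]}$ and $b^{[2]}$ with a specified learning rate $\alpha$:

$$W^{[l]} = W^{[l]} - \alpha \, dW^{[l]}, \quad b^{[l]} = b^{[l]} - \alpha \, db^{[l]}, \quad l = 1, 2$$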
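The update rule is the same for every parameter, so one loop over a parameter dictionary covers both layers (and any number of layers). This sketch assumes gradients are stored under the parameter name prefixed with `d` (for example `dW1` for `W1`); that naming is a hypothetical convention, not a library API.

```python
import numpy as np

def update_parameters(params, grads, learning_rate):
    """One gradient-descent step: theta := theta - alpha * d_theta for every parameter."""
    return {name: value - learning_rate * grads["d" + name] for name, value in params.items()}

params = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.5]])}
grads = {"dW1": np.array([[0.2, -0.4]]), "db1": np.array([[1.0]])}
new_params = update_parameters(params, grads, learning_rate=0.5)
print(new_params["W1"], new_params["b1"])  # [[0.9 2.2]] [[0.]]
```

With $\alpha = 0.5$, each entry moves against its gradient by half the gradient's value: $1.0 - 0.5 \cdot 0.2 = 0.9$ and $2.0 - 0.5 \cdot (-0.4) = 2.2$.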

After one iteration of the loop is finished, we run the model again on the training set, and we expect the value of the cost function to decrease.
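Putting the pieces together, a minimal training loop for the 2-3-1 network might look like the sketch below. The toy dataset (label 1 when $x_1 + x_2 > 0$), the learning rate of 0.5 and the 2000 iterations are assumptions chosen for illustration; the recorded `costs` should decrease over the iterations.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_two_layer(X, Y, n_h=3, learning_rate=0.5, num_iterations=2000, seed=0):
    """Gradient descent on a 2-layer network: tanh hidden layer, sigmoid output."""
    rng = np.random.default_rng(seed)
    n_x, m = X.shape
    W1 = rng.standard_normal((n_h, n_x)) * 0.01
    b1 = np.zeros((n_h, 1))
    W2 = rng.standard_normal((1, n_h)) * 0.01
    b2 = np.zeros((1, 1))
    costs = []
    for _ in range(num_iterations):
        # Forward propagation
        A1 = np.tanh(W1 @ X + b1)
        A2 = sigmoid(W2 @ A1 + b2)
        # Cross-entropy cost; clipping only guards the logs against log(0)
        A2c = np.clip(A2, 1e-12, 1 - 1e-12)
        costs.append(float(-np.mean(Y * np.log(A2c) + (1 - Y) * np.log(1 - A2c))))
        # Backward propagation
        dZ2 = A2 - Y
        dW2 = dZ2 @ A1.T / m
        db2 = np.sum(dZ2, axis=1, keepdims=True) / m
        dZ1 = (W2.T @ dZ2) * (1 - A1 ** 2)
        dW1 = dZ1 @ X.T / m
        db1 = np.sum(dZ1, axis=1, keepdims=True) / m
        # Gradient-descent update
        W1 -= learning_rate * dW1
        b1 -= learning_rate * db1
        W2 -= learning_rate * dW2
        b2 -= learning_rate * db2
    return (W1, b1, W2, b2), costs

# Hypothetical toy data: 200 points in 2-D, labeled 1 when x1 + x2 > 0.
rng = np.random.default_rng(42)
X = rng.standard_normal((2, 200))
Y = (X[0:1] + X[1:2] > 0).astype(float)
params, costs = train_two_layer(X, Y)
print(f"cost: {costs[0]:.3f} -> {costs[-1]:.3f}")
```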

L-layer neural network

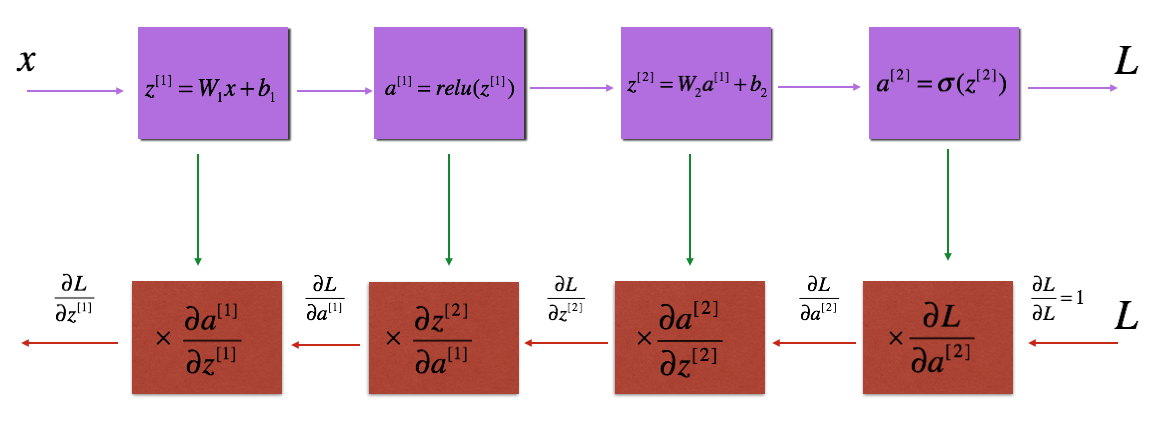

An L-layer neural network follows the same loop as the 2-layer neural network; however, the activation function for the hidden layers is different.

Rather than using $\tanh$ as the activation function, in recent years people have started using the rectified linear unit, ReLU for short: $\text{ReLU}(z) = \max(0, z)$. ReLU has two advantages. First, it is a non-linear function, so it provides similar benefits to other non-linear functions such as $\tanh$ or $\sigma$. Second, its derivative is piecewise constant (1 for $z > 0$ and 0 for $z < 0$), so it is much cheaper to evaluate during backward propagation than the derivative of $\tanh$ or $\sigma$.
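ReLU and its derivative are each a single NumPy expression. The derivative at exactly $z = 0$ is undefined; this sketch assumes the common convention of using 0 there.

```python
import numpy as np

def relu(z):
    return np.maximum(0, z)

def relu_derivative(z):
    # 1 where z > 0 and 0 elsewhere (0 at z == 0 is a convention chosen here)
    return (z > 0).astype(float)

z = np.array([-2.0, -0.5, 0.0, 0.5, 3.0])
print(relu(z))
print(relu_derivative(z))  # [0. 0. 0. 1. 1.]
```

Unlike $\tanh'(z) = 1 - \tanh^2(z)$, the ReLU derivative needs only a comparison, not an extra transcendental function evaluation.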

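In addition, we need to make sure we initialize $W^{[l]}$ with non-zero (typically small random) values. If every $W^{[l]}$ is a matrix of zeros (and the biases are zero too), every unit in a layer computes the same output and receives the same gradient; with ReLU or $\tanh$ hidden units, the hidden activations and the weight gradients are exactly zero, so forward and backward propagation never effectively update the weights, making the model ineffective. Once the weights are random, the biases $b^{[l]}$ can safely start at zero.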
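A small experiment illustrates the problem, assuming a 2-3-1 network with a ReLU hidden layer, a sigmoid output and made-up data. With all-zero parameters, the weight matrices are still exactly zero after several gradient-descent steps (only the output bias moves), while a small random initialization lets the weights change. The helper `train_steps` is hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_steps(W1, b1, W2, b2, X, Y, steps=10, lr=0.5):
    """A few gradient-descent steps on a ReLU hidden layer followed by a sigmoid output."""
    m = X.shape[1]
    for _ in range(steps):
        Z1 = W1 @ X + b1
        A1 = np.maximum(0, Z1)
        A2 = sigmoid(W2 @ A1 + b2)
        dZ2 = A2 - Y
        dW2 = dZ2 @ A1.T / m
        db2 = np.sum(dZ2, axis=1, keepdims=True) / m
        dZ1 = (W2.T @ dZ2) * (Z1 > 0)  # ReLU derivative
        dW1 = dZ1 @ X.T / m
        db1 = np.sum(dZ1, axis=1, keepdims=True) / m
        W1, b1 = W1 - lr * dW1, b1 - lr * db1
        W2, b2 = W2 - lr * dW2, b2 - lr * db2
    return W1, W2

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 50))
Y = (X[0:1] + X[1:2] > 0).astype(float)

# All-zero start: A1 stays 0, so dW2 = 0; W2 stays 0, so dZ1 = 0 and dW1 = 0.
W1_zero, W2_zero = train_steps(np.zeros((3, 2)), np.zeros((3, 1)),
                               np.zeros((1, 3)), np.zeros((1, 1)), X, Y)
print(np.abs(W1_zero).max(), np.abs(W2_zero).max())  # 0.0 0.0

# Small random start: the weights do move.
W1_init = rng.standard_normal((3, 2)) * 0.01
W2_init = rng.standard_normal((1, 3)) * 0.01
W1_rand, W2_rand = train_steps(W1_init, np.zeros((3, 1)), W2_init, np.zeros((1, 1)), X, Y)
print(np.abs(W1_rand - W1_init).max() > 0)  # True
```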

Following the general pattern of building a neural network, we can specify the input parameters in mathematical terms:

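- We have $L$ layers, with input layer $A^{[0]} = X$ and output layer $A^{[L]} = \hat{Y}$. Layer $l$ has $n^{[l]}$ units, with weight $W^{[l]} \in \mathbb{R}^{n^{[l]} \times n^{[l-1]}}$ and bias $b^{[l]} \in \mathbb{R}^{n^{[l]} \times 1}$.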
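A sketch of parameter initialization for an arbitrary list of layer sizes `layer_dims` $= [n^{[0]}, n^{[1]}, \dots, n^{[L]}]$. Instead of a fixed small scale, it uses He initialization (Gaussian weights scaled by $\sqrt{2 / n^{[l-1]}}$), a choice often paired with ReLU layers; that scaling and the function name `initialize_parameters_deep` are assumptions, not something specified in this post.

```python
import numpy as np

def initialize_parameters_deep(layer_dims, seed=0):
    """layer_dims = [n0, n1, ..., nL]; W{l} has shape (n[l], n[l-1]), b{l} has shape (n[l], 1)."""
    rng = np.random.default_rng(seed)
    params = {}
    for l in range(1, len(layer_dims)):
        # He initialization: keeps activation variance roughly stable through ReLU layers
        scale = np.sqrt(2.0 / layer_dims[l - 1])
        params[f"W{l}"] = rng.standard_normal((layer_dims[l], layer_dims[l - 1])) * scale
        params[f"b{l}"] = np.zeros((layer_dims[l], 1))
    return params

params = initialize_parameters_deep([5, 4, 3, 1])  # L = 3: two ReLU layers and a sigmoid output
for name, value in params.items():
    print(name, value.shape)
```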

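The forward propagation is computed using the following equations:

- The first activation layer: $Z^{[1]} = W^{[1]} X + b^{[1]}$, $A^{[1]} = \text{ReLU}(Z^{[1]})$

- The $l$-th activation layer ($1 < l < L$): $Z^{[l]} = W^{[l]} A^{[l-1]} + b^{[l]}$, $A^{[l]} = \text{ReLU}(Z^{[l]})$

- The last activation layer: $Z^{[L]} = W^{[L]} A^{[L-1]} + b^{[L]}$, $A^{[L]} = \sigma(Z^{[L]})$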
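The three forward equations collapse into one loop in NumPy. This sketch assumes the parameters are stored as `W1, b1, ..., WL, bL` in a dictionary and keeps $(A^{[l-1]}, Z^{[l]})$ for each layer as a cache for the backward pass; `L_model_forward` and the cache layout are illustrative names, not a fixed API.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def relu(z):
    return np.maximum(0, z)

def L_model_forward(X, params):
    """[LINEAR -> RELU] x (L-1) -> LINEAR -> SIGMOID; params holds W1, b1, ..., WL, bL."""
    L = len(params) // 2
    A = X  # A[0] = X
    caches = []
    for l in range(1, L):
        Z = params[f"W{l}"] @ A + params[f"b{l}"]
        caches.append((A, Z))  # keep A[l-1] and Z[l] for the backward pass
        A = relu(Z)
    ZL = params[f"W{L}"] @ A + params[f"b{L}"]
    caches.append((A, ZL))
    AL = sigmoid(ZL)
    return AL, caches

# Hypothetical example: 4 input features, hidden layers of 5 and 3 units, 1 output unit.
rng = np.random.default_rng(0)
dims = [4, 5, 3, 1]
params = {}
for l in range(1, len(dims)):
    params[f"W{l}"] = rng.standard_normal((dims[l], dims[l - 1])) * np.sqrt(2.0 / dims[l - 1])
    params[f"b{l}"] = np.zeros((dims[l], 1))
X = rng.standard_normal((4, 6))  # 6 examples
AL, caches = L_model_forward(X, params)
print(AL.shape, len(caches))  # (1, 6) 3
```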

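Next we compute the cost function to check whether our model is actually learning:

$$J = -\frac{1}{m} \sum_{i=1}^{m} \left( y^{(i)} \log a^{[L](i)} + \left(1 - y^{(i)}\right) \log\left(1 - a^{[L](i)}\right) \right)$$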

Then we calculate the backward propagation, which mirrors the forward propagation steps in reverse order:

- linear backward

- linear → activation backward, where activation computes the derivative of the ReLU or sigmoid activation

- [linear → ReLU] × (L-1) → linear → sigmoid backward (whole model)
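The activation-backward step turns $dA^{[l]}$ into $dZ^{[l]} = dA^{[l]} * g'(Z^{[l]})$. The sketch below assumes ReLU hidden layers and a sigmoid output trained with the cross-entropy cost; it also checks numerically that chaining the output's $dA^{[L]}$ through the sigmoid derivative gives $A^{[L]} - Y$. The helper names are hypothetical.

```python
import numpy as np

def relu_backward(dA, Z):
    """dZ = dA * ReLU'(Z), using ReLU'(Z) = 1 where Z > 0 and 0 elsewhere."""
    return dA * (Z > 0)

def sigmoid_backward(dA, Z):
    """dZ = dA * s * (1 - s), where s = sigmoid(Z)."""
    s = 1.0 / (1.0 + np.exp(-Z))
    return dA * s * (1 - s)

def output_dA(AL, Y):
    """Per-example derivative of the cross-entropy loss with respect to A[L]."""
    return -(Y / AL - (1 - Y) / (1 - AL))

# Chaining the output derivative through the sigmoid gives the familiar A[L] - Y.
ZL = np.array([[-1.0, 0.3, 2.0]])
Y = np.array([[0.0, 1.0, 1.0]])
AL = 1.0 / (1.0 + np.exp(-ZL))
dZL = sigmoid_backward(output_dA(AL, Y), ZL)
print(np.allclose(dZL, AL - Y))  # True

# ReLU backward simply zeroes the gradient wherever Z <= 0.
print(relu_backward(np.array([[1.0, 2.0, 3.0]]), np.array([[-1.0, 0.0, 2.0]])))  # [[0. 0. 3.]]
```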

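For layer $l$, the linear part is $Z^{[l]} = W^{[l]} A^{[l-1]} + b^{[l]}$ (followed by an activation).

Suppose we have already calculated the derivative $dZ^{[l]}$. We want to get $dW^{[l]}$, $db^{[l]}$ and $dA^{[l-1]}$:

$$dW^{[l]} = \frac{1}{m} dZ^{[l]} A^{[l-1]T}$$
$$db^{[l]} = \frac{1}{m} \sum_{i=1}^{m} dZ^{[l](i)}$$
$$dA^{[l-1]} = W^{[l]T} dZ^{[l]}$$

Here $dZ^{[l]} = dA^{[l]} * g'(Z^{[l]})$ for the layer's activation $g$, and at the output layer $dZ^{[L]} = A^{[L]} - Y$.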
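The linear-backward step is three lines of NumPy. To check it, the sketch uses a made-up cost $J = \frac{1}{m}\sum G * Z$ whose per-example derivative with respect to $Z$ is a fixed random matrix $G$, so the analytic $dW$ can be compared with a finite difference. `linear_backward`, `G` and `J` are hypothetical names used only for illustration.

```python
import numpy as np

def linear_backward(dZ, A_prev, W):
    """Given dZ[l] (per-example convention), return dA[l-1], dW[l], db[l]
    for the linear step Z[l] = W[l] A[l-1] + b[l]."""
    m = A_prev.shape[1]
    dW = dZ @ A_prev.T / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = W.T @ dZ
    return dA_prev, dW, db

rng = np.random.default_rng(0)
A_prev = rng.standard_normal((3, 4))  # n[l-1] = 3 units, m = 4 examples
W = rng.standard_normal((2, 3))       # n[l] = 2 units
b = rng.standard_normal((2, 1))
G = rng.standard_normal((2, 4))       # hypothetical upstream dZ[l]

def J(W, b):
    """Hypothetical cost (1/m) * sum(G * Z), whose per-example dZ is exactly G."""
    return np.sum(G * (W @ A_prev + b)) / A_prev.shape[1]

dA_prev, dW, db = linear_backward(G, A_prev, W)

# Centered finite difference on one entry of W.
eps = 1e-6
E = np.zeros_like(W)
E[1, 2] = eps
numeric_dW = (J(W + E, b) - J(W - E, b)) / (2 * eps)
print(dW[1, 2], numeric_dW)  # the two values should agree closely
```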

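Now that we have $dW^{[l]}$ and $db^{[l]}$ for every layer, we can update our parameters using gradient descent:

$$W^{[l]} = W^{[l]} - \alpha \, dW^{[l]}, \quad b^{[l]} = b^{[l]} - \alpha \, db^{[l]}, \quad l = 1, \dots, L$$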

As with the 2-layer neural network, after one iteration of the loop is finished, we run the model again on the training set, and we expect the value of the cost function to decrease.
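An end-to-end sketch of the L-layer loop, combining He-initialized parameters, the [linear → ReLU] × (L-1) → linear → sigmoid forward pass, the backward equations and gradient descent. The layer sizes `[2, 8, 4, 1]`, the learning rate, the iteration count and the toy dataset (points inside the unit circle labeled 1) are all assumptions for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def init_deep(layer_dims, rng):
    """He-initialized weights and zero biases for layer sizes [n0, ..., nL]."""
    params = {}
    for l in range(1, len(layer_dims)):
        scale = np.sqrt(2.0 / layer_dims[l - 1])
        params[f"W{l}"] = rng.standard_normal((layer_dims[l], layer_dims[l - 1])) * scale
        params[f"b{l}"] = np.zeros((layer_dims[l], 1))
    return params

def forward(X, params):
    """ReLU for layers 1..L-1, sigmoid for layer L; caches (A[l-1], Z[l]) per layer."""
    L = len(params) // 2
    A, caches = X, []
    for l in range(1, L + 1):
        Z = params[f"W{l}"] @ A + params[f"b{l}"]
        caches.append((A, Z))
        A = sigmoid(Z) if l == L else np.maximum(0, Z)
    return A, caches

def backward(AL, Y, params, caches):
    """Gradients of the mean cross-entropy cost with respect to every W{l} and b{l}."""
    L = len(caches)
    m = Y.shape[1]
    grads = {}
    dZ = AL - Y  # sigmoid output with cross-entropy: dZ[L] = A[L] - Y
    dA = None
    for l in range(L, 0, -1):
        A_prev, Z = caches[l - 1]
        if l < L:
            dZ = dA * (Z > 0)  # ReLU backward
        grads[f"dW{l}"] = dZ @ A_prev.T / m
        grads[f"db{l}"] = np.sum(dZ, axis=1, keepdims=True) / m
        dA = params[f"W{l}"].T @ dZ  # dA[l-1], consumed by the next (lower) layer
    return grads

def train(X, Y, layer_dims, learning_rate=0.1, num_iterations=1000, seed=0):
    rng = np.random.default_rng(seed)
    params = init_deep(layer_dims, rng)
    costs = []
    for _ in range(num_iterations):
        AL, caches = forward(X, params)
        ALc = np.clip(AL, 1e-12, 1 - 1e-12)  # guard the logs against log(0)
        costs.append(float(-np.mean(Y * np.log(ALc) + (1 - Y) * np.log(1 - ALc))))
        grads = backward(AL, Y, params, caches)
        for key in params:
            params[key] = params[key] - learning_rate * grads["d" + key]
    return params, costs

# Hypothetical toy data: label 1 for points inside the unit circle.
rng = np.random.default_rng(3)
X = rng.standard_normal((2, 300))
Y = (np.sum(X ** 2, axis=0, keepdims=True) < 1.0).astype(float)
params, costs = train(X, Y, [2, 8, 4, 1])
print(f"cost: {costs[0]:.3f} -> {costs[-1]:.3f}")
```

The circle-shaped decision boundary cannot be drawn by a single logistic regression unit, which is why this toy problem needs the hidden ReLU layers.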